228 : What is Google Worth?

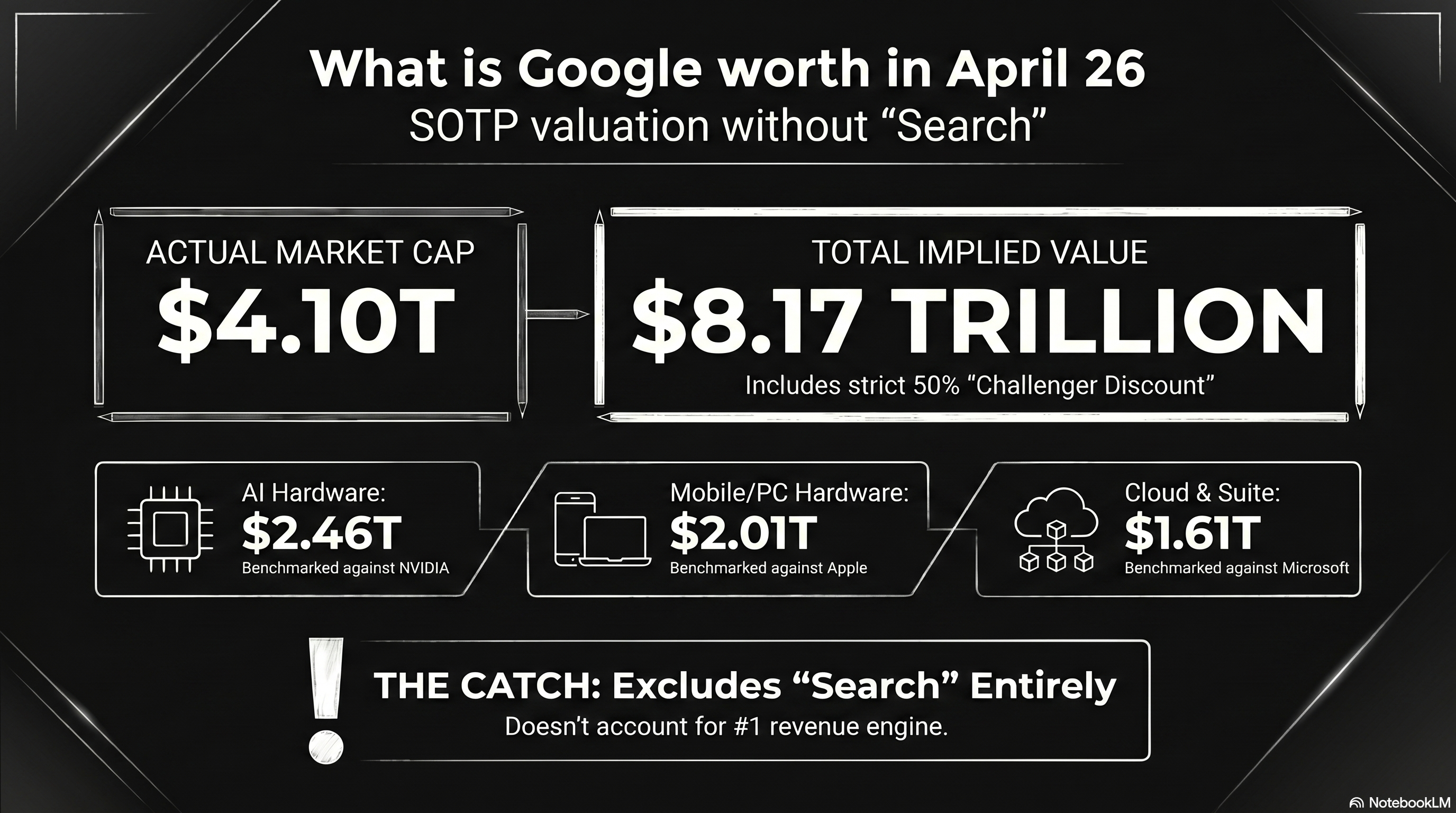

This market cap prediction model uses a Sum-of-the-Parts (SOTP) valuation approach. It benchmarks Google's individual business units against the market leaders and applies a 50% "Challenger Discount" to account for the market leader's secondary moats (e.g., Amazon’s retail logistics or Tesla’s manufacturing scale). 1 2

Based on 2026 data, here is the breakdown of Google's implied valuation:

Google SOTP Valuation Model (2026 Projection)

| Segment | Market Leader | Leader Market Cap | Google "Implied" Value (50% Discount) | Notes on Leader's Moat |

|---|---|---|---|---|

| AI Hardware | NVIDIA | 4.92 Trillion USD | 2.46 Trillion USD | CUDA ecosystem & GPU dominance. |

| Hardware (Mobile/PC) | Apple | 4.02 Trillion USD | 2.01 Trillion USD | Premium hardware & ecosystem lock-in. |

| Suite, Browser, Cloud | Microsoft | 3.21 Trillion USD | 1.61 Trillion USD | Enterprise sales & Windows integration. |

| Streaming (YouTube) | Netflix | 350 Billion USD* | 175 Billion USD | Content library & brand loyalty. |

| Cloud Infrastructure | Amazon | 2.58 Trillion USD | 1.29 Trillion USD | Logistics & e-commerce integration. |

| Autonomous (Waymo) | Tesla | 1.25 Trillion USD | 625 Billion USD | Mass production & Supercharger network. |

| TOTAL IMPLIED CAP | 8.17 Trillion USD |

*Netflix market cap is an estimate based on 2026 growth trends; others are current market figures.

Analysis of the Model

-

The "Discount" Paradox: While we applied a 50% discount for Google being #2, the model yields an implied market cap of 8.17 Trillion USD. This is significantly higher than Google's actual 2026 market cap (approx. 4.1 Trillion USD). 3

-

Market Realities: In several categories, the market currently discounts Google even further than 50%. For example, while Waymo is technologically ahead of Tesla in L4 autonomy, the market values Tesla’s "Autonomy" potential much higher due to its fleet of millions of cars on the road. 4

-

The "Search" Elephant: This model focuses on specific comparisons, but it excludes Google’s primary engine: Search. Google is the undisputed #1 in Search, which generates the massive cash flow (60 Billion USD+ per quarter) that funds "challenger" bets like Waymo and TPU development. 5

By looking at Google this way, you can see that if Google were broken up, the individual pieces might actually be worth more than the whole company is today—a concept known as a "conglomerate discount." Since the biggest component of this valuation model is AI hardware, a deeper look into how Google's hardware (TPU 8) is specifically trying to close the gap with NVIDIA is essential. 6 7

Google TPU 8 Family versus NVIDIA Vera Rubin Platform

The computational infrastructure landscape of 2026 is defined by a fundamental pivot away from general-purpose acceleration toward hyper-specialized, workload-aware silicon architectures. As the industry enters the "agentic era"—characterized by autonomous AI agents capable of multi-step reasoning, planning, and tool execution—the demands placed on hardware have bifurcated. 1 8 2

Model training now requires unprecedented scale-out throughput and resilient synchronization across hundreds of thousands of nodes, while model inference demands ultra-low latency, massive memory bandwidth, and the ability to host multi-trillion parameter weights with minimal energy overhead.

The recent unveiling of Google’s eighth-generation Tensor Processing Units (TPU 8t and TPU 8i) at Google Cloud Next 2026, alongside the maturation of NVIDIA’s Vera Rubin platform, presents a critical choice for AI developers. 6 9 1

The Architectural Divergence

The central theme of the 2026 hardware cycle is the end of the "one-size-fits-all" accelerator. For the first time, Google has explicitly split its TPU roadmap into two distinct products: the TPU 8t (Sunfish), optimized for massive-scale pre-training, and the TPU 8i (Zebrafish), engineered for high-concurrency reasoning and sampling. 10 11 12

This specialization is a response to the "inference inflection point," where the hardware requirements for serving millions of active agents have diverged sharply from the requirements for building the underlying frontier models. 3 13

NVIDIA, conversely, continues to leverage its unified architectural approach with the Vera Rubin platform. While NVIDIA has introduced specialized features for inference—such as the Transformer Engine and hardware-accelerated compression for FP4—the Rubin R100 GPU remains a converged powerhouse capable of handling both halves of the AI lifecycle within a single, highly flexible substrate. 14 15 16

Google TPU 8t: The Training Powerhouse

The TPU 8t represents Google’s eighth iteration of its custom AI silicon, developed in collaboration with Broadcom. 10 17 It is designed to minimize the development cycle of frontier models from months to weeks by maximizing "goodput"—the ratio of productive compute time to total uptime. 18 19

- SparseCore Advantage: Unlike general-purpose GPUs that can struggle with irregular memory access patterns, the TPU 8t features the SparseCore. This handles embedding lookups and data-dependent "all-gather" operations, offloading these from the primary Matrix Multiply Units (MXU). 6 18

- VPU/MXU Overlap: The 8t architecture implements balanced scaling for its Vector Processing Units (VPU). This allows for the simultaneous execution of quantization, softmax, and layernorm operations alongside matrix multiplications. 18

- Native FP4 Support: By introducing native 4-bit floating point (FP4) support, the TPU 8t effectively doubles MXU throughput while maintaining the accuracy of larger models. 6

| Feature | TPU 8t (Sunfish) |

|---|---|

| Primary Workload | Large-scale pre-training |

| Peak Compute (FP4) | Petaflops (Per Chip) |

| HBM Capacity | GB |

| Interconnect | Virgo (3D Torus) 6 |

| Scaling | chips/superpod 18 |

Google TPU 8i: The Inference Specialist

The TPU 8i, co-designed with MediaTek, represents Google’s move to solve the "memory wall" that limits real-time AI agents. 20 7 The chip’s defining feature is its MB of on-chip SRAM—a three-fold increase over the previous generation—which allows the system to host a significantly larger Key-Value (KV) cache entirely on-silicon. 18 2

This is critical for long-context reasoning models, as it eliminates the latency associated with fetching KV blocks from HBM during auto-regressive decoding. 18 Furthermore, the dedicated Collectives Acceleration Engine (CAE) reduces on-chip latency for synchronization steps, facilitating the rapid "chain-of-thought" processing required for agentic workflows. 6 18

| Feature | TPU 8i (Zebrafish) |

|---|---|

| Primary Workload | Sampling and Reasoning |

| HBM Capacity | GB |

| On-Chip SRAM | MB 18 |

| Price-Performance | better than Ironwood 21 |

| Specialized Engine | Collectives Acceleration Engine (CAE) |

NVIDIA Vera Rubin: The Converged Superchip

The NVIDIA Vera Rubin platform, announced as the successor to Blackwell, represents the zenith of high-density, high-flexibility AI compute. The Rubin R100 GPU is built on TSMC’s 2nm process. 14 22 Unlike the TPU 8 family, which is exclusively available via Google Cloud, Rubin is designed for broad deployment across all hyperscalers and on-premise AI factories. 9 23 24

R100 GPU and HBM4 Memory

The Rubin R100 leverages the first implementation of HBM4 memory, delivering an aggregate bandwidth of up to TB/s per GPU. 14 25 This represents a massive leap over the Blackwell generation. For an AI developer, this bandwidth is essential for sustaining the compute pipeline during long-context inference where the model must alternate between compute-heavy attention mechanisms and memory-bound KV cache lookups. 15 16

| Specification | NVIDIA Rubin R100 |

|---|---|

| Memory Type | HBM4 |

| VRAM Capacity | GB 14 |

| Memory Bandwidth | TB/s 15 |

| FP4 Inference | PFLOPS 14 |

| FP4 Training | PFLOPS 14 |

| CPU | Vera (Arm-based Olympus) 16 |

Memory Hierarchy and the "Memory Wall"

The competition in 2026 is largely a struggle to overcome the "memory wall"—the gap between processor speed and the rate at which data can be fetched from memory. 12

HBM4 vs. HBM3e Bandwidth

The NVIDIA Rubin R100’s use of HBM4 provides a clear bandwidth advantage at TB/s per GPU. This is more than triple the bandwidth available on the TPU 8 family. 15 26 In inference scenarios where the model size exceeds the on-chip cache, the HBM4 bandwidth directly translates to higher tokens per second. 1 16

On-Chip SRAM and KV Cache Strategy

Google’s TPU 8i takes a different approach by focusing on the "active working set." The MB of SRAM is sized specifically to host the KV cache for the largest reasoning models at production scale. 18 By keeping the most frequently accessed data on-silicon, the TPU 8i minimizes the idle time of the compute cores during the decoding phase. 18 For an AI developer, this means the TPU 8i may provide superior latency for "agentic" tasks that involve long-context reasoning. 6 27

Economic Analysis: Performance-per-Dollar

For the AI developer, the "best" chip is often the one that provides the most productive compute time per dollar spent. Google’s eighth-generation launch is framed around cost-effectiveness. 28

- TPU 8t: performance increase per dollar compared to Ironwood. 17 19

- TPU 8i: better performance-per-dollar for inference tasks. 18 20

NVIDIA argues that the Rubin platform delivers a significantly lower cost-per-token for inference compared to Blackwell. While the hourly rental rate for a Rubin GPU is higher, NVIDIA’s massive per-chip throughput means the developer needs fewer hours of compute to achieve the same result. 14 29

The Software Ecosystem: Portability vs. Integration

The choice of hardware often dictates the choice of software framework, which in turn affects developer productivity and the ability to move workloads.

CUDA: The Industry Standard

NVIDIA’s "moat" remains the CUDA software ecosystem. Every major frontier AI lab has its toolsets built specifically around NVIDIA's ecosystem. 30 16

JAX and XLA: The Specialized Alternative

Google’s TPUs rely on the XLA (Accelerated Linear Algebra) compiler and frameworks like JAX. 31 32 While JAX is praised for its functional design, porting a CUDA-optimized model to TPU is often described as a "software engineering tax". However, Google is closing this gap with Pallas, an experimental JAX extension that allows developers to write hardware-aware kernels. 33 34

Conclusion

For the AI developer in the agentic era, the choice between Google’s TPU 8 family and NVIDIA’s Vera Rubin platform has shifted to a strict calculation of training and operational economics.

The Google TPU 8t offers a clear advantage in cluster-level capital efficiency for massive training runs. Its vertically integrated stack minimizes hidden synchronization costs and maximizes the return on every megawatt consumed. 17

The NVIDIA Vera Rubin platform remains the preferred choice for developers who prioritize the absolute fastest training throughput per chip and the flexibility to deploy high-density compute across multi-cloud or on-premise environments. 9

Tips and Donations

If you enjoyed this deep dive, consider supporting the project with a tip in Sats. It's a simple, global way to support independent research.

To send Sats, you'll need a lightning wallet.

Research

-

Our eighth generation TPUs: two chips for the agentic era - Google Blog, April 2026. ↩ ↩2 ↩3 ↩4

-

Google Bets On The Agentic AI Era With Its AI Hypercomputer - Wccftech, April 2026. ↩ ↩2 ↩3

-

Wall Street Pro Thinks Google's AI Chip Edge Is Getting Harder to Ignore - 247wallst, April 2026. ↩ ↩2

-

Google may have made it official, tells Nvidia: Yes, we are coming after you with our new... - Times of India, April 2026. ↩

-

Google doesn't pay the Nvidia tax. Its new TPUs explain why. - VentureBeat, April 2026. ↩

-

Google Bolsters AI Hypercomputer with New TPU Chips, Virgo Interconnect, Speedier Lustre - HPCwire, April 2026. ↩ ↩2 ↩3 ↩4 ↩5 ↩6 ↩7

-

Google Unveils Dedicated AI Chips 'TPU 8t & 8i' for Training and Inference - BigGo Finance, April 2026. ↩ ↩2

-

AI infrastructure at Next '26 | Google Cloud Blog, April 2026. ↩

-

Google debuts eighth-gen TPU chips for training and inference with big performance claims - Neowin, April 2026. ↩ ↩2 ↩3

-

Google TPU 8t and TPU 8i: Nvidia AI Chip Rival 2026 - TECHi, April 2026. ↩ ↩2

-

Google dual tracks TPU 8 to conquer training and inference - The Register, April 2026. ↩

-

Google TPU-8 Splits Training and Inference for Agentic Era - byteiota, April 2026. ↩ ↩2

-

The Custom Silicon Inflection Point: Hyperscaler ASICs Challenge NVIDIA's GPU Dominance in 2026 - Introl, April 2026. ↩

-

NVIDIA Rubin R100 GPU | 288GB HBM4, 50 Petaflops | Next-Gen AI Accelerator - SLYD, April 2026. ↩ ↩2 ↩3 ↩4 ↩5 ↩6 ↩7

-

Infrastructure for Scalable AI Reasoning | NVIDIA Vera Rubin Platform, April 2026. ↩ ↩2 ↩3 ↩4

-

Inside the NVIDIA Vera Rubin Platform: Six New Chips, One AI Supercomputer - NVIDIA Developer Blog, April 2026. ↩ ↩2 ↩3 ↩4 ↩5

-

Google Unveils Eighth-Generation TPU Dual-Chip Strategy, Marking a "Watershed" for Training and Inference - BigGo Finance, April 2026. ↩ ↩2 ↩3

-

TPU 8t and TPU 8i technical deep dive | Google Cloud Blog, April 2026. ↩ ↩2 ↩3 ↩4 ↩5 ↩6 ↩7 ↩8 ↩9 ↩10 ↩11

-

Google presents TPU 8t and TPU 8i chips; splits training and ... - Techzine, April 2026. ↩ ↩2

-

Google has announced its 8th generation of AI processing chips: TPU 8t and TPU 8i - GIGAZINE, April 2026. ↩ ↩2

-

Google launches Ironwood TPU and previews eighth-gen split into ... - TheNextWeb, April 2026. ↩

-

Vera Rubin GPU, Nvidia CES 2026 : r/accelerate - Reddit, April 2026. ↩

-

Google Cloud AI infrastructure at NVIDIA GTC 2026 - Google Cloud Blog, April 2026. ↩

-

NVIDIA Rubin R100: Specs, Architecture, and GPU Cloud Availability | Spheron Blog, April 2026. ↩

-

Rubin vs Blackwell vs Hopper: NVIDIA GPU Architecture Comparison | Spheron Blog, April 2026. ↩

-

Nvidia reportedly boosts Vera Rubin performance to ward hyperscalers off AMD Instinct AI accelerators - Tom's Hardware, April 2026. ↩

-

Google launches two new TPUs at once! With training and inference - Futu, April 2026. ↩

-

Google bets on workload-specific TPUs with 8t and 8i launch - Network World, April 2026. ↩

-

NVIDIA AI GPU Prices: H100 (40K) & H200 ($315K/8-GPU) Cost Guide - IntuitionLabs, April 2026. ↩

-

AWS and NVIDIA deepen strategic collaboration to accelerate AI from pilot to production - AWS Blog, April 2026. ↩

-

JAX CUDA Optimization Guide: XLA and GPU Acceleration - RightNow AI, April 2026. ↩

-

Accelerating Long-Context Model Training in JAX and XLA | NVIDIA Technical Blog, April 2026. ↩

-

Kade Heckel: Optimizing GPU/TPU code with JAX and Pallas - Open Neuromorphic, April 2026. ↩

-

Pallas Design - JAX documentation, April 2026. ↩